You may experience these symptoms:

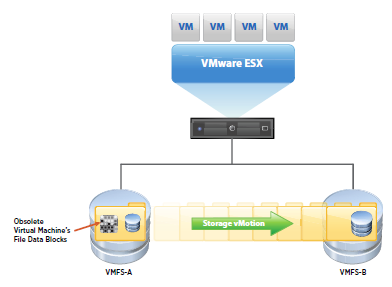

- Storage vMotion fails

- The Storage vMotion operation times out at 5-10% or 90-95% complete

- On ESX 4.1, you may see the following errors:

Hostd Log

vix: [7196 foundryVM.c:10177]: Error VIX_E_INVALID_ARG in VixVM_CancelOps(): One of the parameters was invalid ‘vm:/vmfs/volumes/4e417019-4a3c4130-ed96-a4badb51cd0a/Mail02/Mail02.vmx’ opID=9BED9F06-000002BE-9d] Failed to unset VM medatadata: FileIO error: Could not find file : /vmfs/volumes/4e417019-4a3c4130-ed96-a4badb51cd0a/Mail02/Mail02-aux.xml.tmp.

vmkernel: 114:03:25:51.489 cpu0:4100)WARNING: FSR: 690: 1313159068180024 S: Maximum switchover time (100 seconds) reached. Failing migration; VM should resume on source.

vmkernel: 114:03:25:51.489 cpu2:10561)WARNING: FSR: 3281: 1313159068180024 D: The migration exceeded the maximum switchover time of 100 second(s). ESX has preemptively failed the migration to allow the VM to continue running on the source host.

vmkernel: 114:03:25:51.489 cpu2:10561)WARNING: Migrate: 296: 1313159068180024 D: Failed: Maximum switchover time for migration exceeded(0xbad0109) @0x41800f61cee2

vCenter Log

[yyyy-mm-dd hh:mm:ss.nnn tttt error ‘App’] [MIGRATE] (migrateidentifier) vMotion failed: vmodl.fault.SystemError

[yyyy-mm-dd hh:mm:ss.nnn tttt verbose ‘App’] [VpxVmomi] Throw vmodl.fault.SystemError with:

(vmodl.fault.SystemError) {

dynamicType = ,

reason = “Source detected that destination failed to resume.”,

msg = “A general system error occurred: Source detected that destination failed to resume.”
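To check whether a saved vmkernel log contains this symptom, the log can be scanned for the switchover warning. The following is a minimal sketch, not a VMware tool: the function name `find_switchover_timeouts` is hypothetical, and the pattern is taken only from the vmkernel warning quoted above, so other ESX versions may word the message differently.

```python
import re

# Pattern derived from the vmkernel warning quoted above (assumption:
# the message wording is the same in the log being scanned).
SWITCHOVER_RE = re.compile(r"Maximum switchover time \((\d+) seconds\) reached")


def find_switchover_timeouts(lines):
    """Return (line_number, timeout_seconds) for each matching log line."""
    hits = []
    for number, line in enumerate(lines, start=1):
        match = SWITCHOVER_RE.search(line)
        if match:
            hits.append((number, int(match.group(1))))
    return hits


# Two of the vmkernel lines from the symptoms above, used as sample input.
sample = [
    "vmkernel: 114:03:25:51.489 cpu0:4100)WARNING: FSR: 690: 1313159068180024 S: "
    "Maximum switchover time (100 seconds) reached. Failing migration; "
    "VM should resume on source.",
    "vmkernel: 114:03:25:51.489 cpu2:10561)WARNING: Migrate: 296: 1313159068180024 D: "
    "Failed: Maximum switchover time for migration exceeded(0xbad0109) @0x41800f61cee2",
]

for line_number, seconds in find_switchover_timeouts(sample):
    print(f"line {line_number}: switchover timeout of {seconds} seconds reached")
```

The captured number is the timeout that was in effect when the migration failed, which is a useful reference point when choosing a larger value.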

Resolution

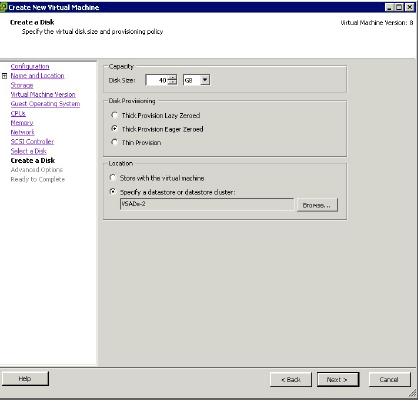

Note: A virtual machine with many virtual disks might be unable to complete a migration with Storage vMotion. The Storage vMotion process requires time to open, close, and process disks during the final copy phase. Storage vMotion migration of virtual machines with many disks might time out because of this per-disk overhead.

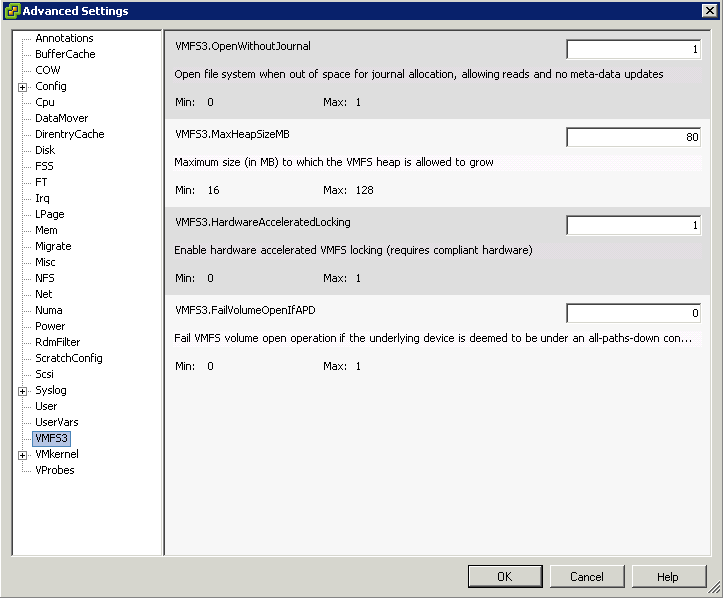

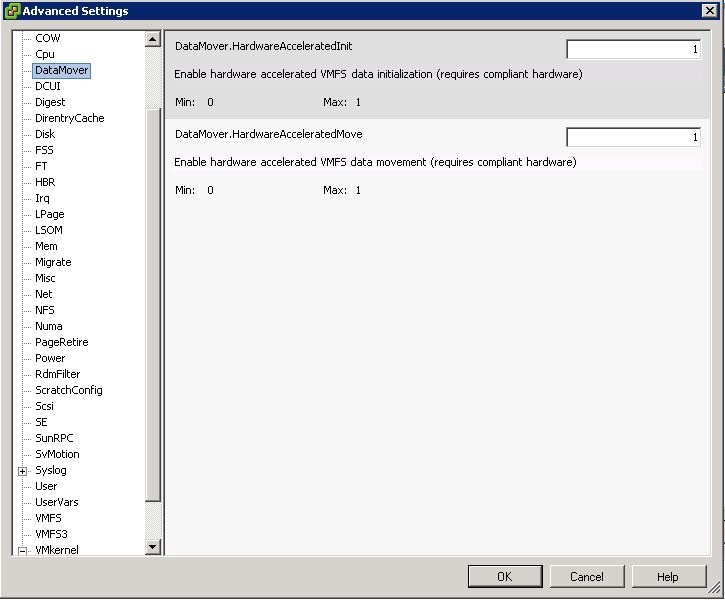

This timeout occurs when the maximum amount of time for switchover to the destination is exceeded. This may occur if there are a large number of provisioning, migration, or power operations occurring on the same datastore as the Storage vMotion. The virtual machine’s disk files are reopened during this time, so disk performance issues or large numbers of disks may lead to timeouts.

To increase the switchover timeout, modify the fsr.maxSwitchoverSeconds option using the vSphere Client:

- Open vSphere Client and connect to the ESX/ESXi host or to vCenter Server.

- Locate the virtual machine in the inventory.

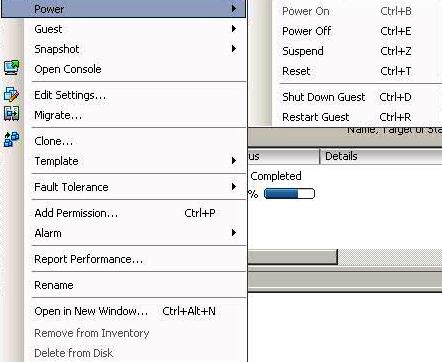

- Power off the virtual machine.

- Right-click the virtual machine and click Edit Settings.

- Click the Options tab.

- Select the Advanced: General section.

- Click the Configuration Parameters button.

- In the Configuration Parameters window, click Add Row.

- In the Name field, enter the parameter name: fsr.maxSwitchoverSeconds

- In the Value field, enter the new timeout value in seconds (for example: 300).

- Click OK twice to save the configuration change.

- Power on the virtual machine.

Alternatively, the virtual machine’s configuration file can be edited manually to add or modify the option. Add the option on its own line: fsr.maxSwitchoverSeconds = "300"

Note: To edit a virtual machine’s configuration file, you need to power off the virtual machine, remove it from the inventory, make the changes to the .vmx file, add the virtual machine back to the inventory, and power it on again.
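The manual edit can be scripted against a copy of the .vmx file. The sketch below is illustrative only: the helper `set_vmx_option` is hypothetical, and it assumes the common .vmx convention of one `key = "value"` entry per line with the value in double quotes. It works on the file's text and does not handle powering off the virtual machine or removing it from and re-adding it to the inventory, so those steps still apply.

```python
import re

OPTION = "fsr.maxSwitchoverSeconds"


def set_vmx_option(vmx_text, key, value):
    """Return vmx_text with key set to value, replacing any existing entry.

    Assumes one 'key = "value"' entry per line (typical .vmx layout).
    """
    new_line = f'{key} = "{value}"'
    pattern = re.compile(rf"^\s*{re.escape(key)}\s*=")
    out = []
    replaced = False
    for line in vmx_text.splitlines():
        if pattern.match(line):
            if not replaced:
                out.append(new_line)  # replace the first occurrence
                replaced = True
            # any later duplicate entries for the same key are dropped
        else:
            out.append(line)
    if not replaced:
        out.append(new_line)  # option was absent: add it on its own line
    return "\n".join(out) + "\n"


# Example with a one-line stand-in for a real .vmx file.
original = 'displayName = "Mail02"\n'
updated = set_vmx_option(original, OPTION, 300)
print(updated, end="")
```

Running the function a second time with a different value replaces the existing line rather than appending a duplicate, so the option stays on a single line as required.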