Tuning Configuration

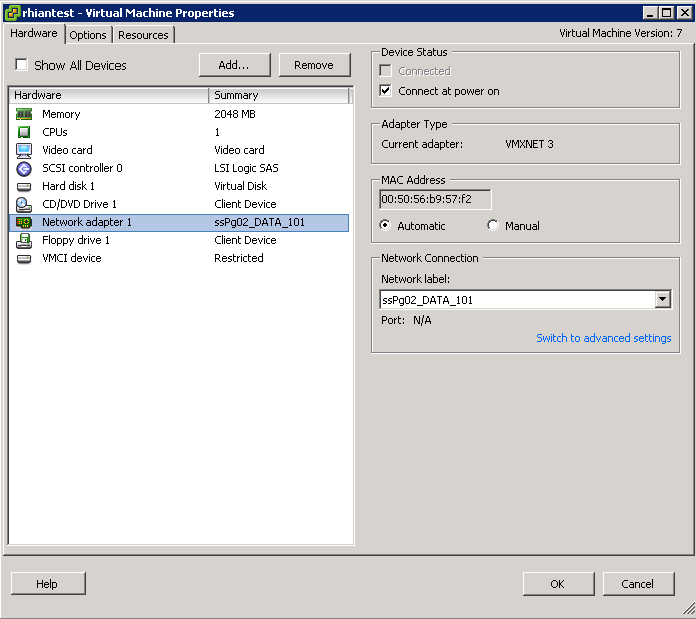

- Use the correct virtual hardware for the guest O/S

- Use paravirtual hardware (such as the PVSCSI storage adapter and the VMXNET3 network adapter) for I/O-intensive applications
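As a minimal sketch of the paravirtual hardware choice, the helper below renders the `.vmx` lines that select the virtual adapter types. It assumes the standard `.vmx` device values `pvscsi`, `lsisas1068`, `vmxnet3` and `e1000`; the `io_intensive` flag and the emulated fallbacks are illustrative assumptions, and older guests may need a different emulated adapter.

```python
# Hypothetical helper: renders .vmx lines that pick the virtual adapter types.
# The io_intensive flag and the emulated fallbacks are illustrative assumptions.


def scsi_controller_line(bus: int, io_intensive: bool) -> str:
    """Pick PVSCSI for I/O-intensive VMs, else an emulated LSI Logic SAS controller."""
    device = "pvscsi" if io_intensive else "lsisas1068"
    return f'scsi{bus}.virtualDev = "{device}"'


def network_adapter_line(index: int, io_intensive: bool) -> str:
    """Pick VMXNET3 for I/O-intensive VMs, else an emulated E1000 NIC."""
    device = "vmxnet3" if io_intensive else "e1000"
    return f'ethernet{index}.virtualDev = "{device}"'


if __name__ == "__main__":
    print(scsi_controller_line(0, io_intensive=True))
    print(network_adapter_line(0, io_intensive=True))
```

Paravirtual adapters need the matching drivers in the guest (normally supplied by VMware Tools), which is why the emulated types remain a sensible fallback.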

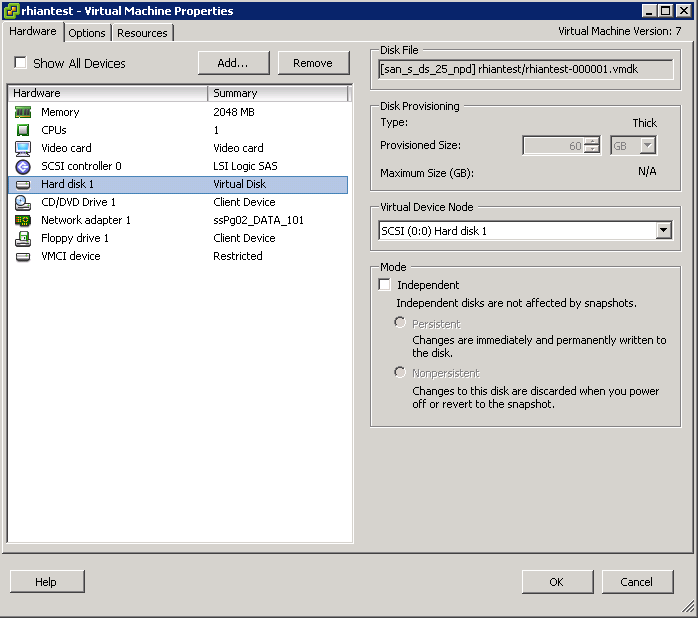

- Use the LSI Logic SAS adapter for newer guest O/S versions

- Size the guest O/S queue depth appropriately for the workload

- Make sure guest O/S partitions are aligned to the boundaries of the underlying storage

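- Know which disk provisioning policy best suits the workload: thick provision lazy zeroed (the default), thick provision eager zeroed, or thin provision. Eager zeroed disks zero every block at creation, so they avoid the zero-on-first-write penalty that lazy zeroed and thin disks pay.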
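One common way to reason about the queue depth bullet is Little's law: the number of outstanding I/Os equals throughput multiplied by latency. The sketch below applies it; the target IOPS and latency figures are hypothetical, and real limits also depend on the adapter, driver and array.

```python
# Little's law sizing: outstanding I/Os = IOPS x latency.
# The example IOPS and latency values are illustrative assumptions.


def required_queue_depth(target_iops: int, latency_us: int) -> int:
    """Outstanding I/Os needed to sustain target_iops at latency_us, rounded up."""
    if target_iops < 0 or latency_us <= 0:
        raise ValueError("target_iops must be >= 0 and latency_us must be > 0")
    # Integer ceiling division (1 s = 1_000_000 us) avoids float rounding issues.
    return -(-(target_iops * latency_us) // 1_000_000)


def max_iops(queue_depth: int, latency_us: int) -> int:
    """Upper bound on the IOPS a given queue depth can sustain at latency_us."""
    if queue_depth < 0 or latency_us <= 0:
        raise ValueError("queue_depth must be >= 0 and latency_us must be > 0")
    return queue_depth * 1_000_000 // latency_us


if __name__ == "__main__":
    print(required_queue_depth(20_000, 2_000))  # 40 outstanding I/Os
    print(max_iops(32, 2_000))                  # 16000 IOPS
```

If the guest's configured queue depth is below the required value, I/Os wait inside the guest and latency rises; raising it only helps if the storage behind it can absorb the extra outstanding I/Os.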
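Partition alignment comes down to simple arithmetic: a partition is aligned when its starting byte offset is a multiple of the storage boundary. Legacy guests such as Windows Server 2003 start the first partition at sector 63, while newer Windows versions default to a 1 MiB offset (sector 2048). The 64 KiB and 1 MiB boundaries and the 512-byte sector size below are assumed examples; check the array vendor's guidance for the real boundary.

```python
# Partition alignment check. Boundaries and sector size are assumed examples.

SECTOR_SIZE = 512  # bytes per logical sector (assumption; 4Kn disks use 4096)


def start_offset_bytes(start_sector: int, sector_size: int = SECTOR_SIZE) -> int:
    """Byte offset at which the partition begins."""
    return start_sector * sector_size


def is_aligned(start_sector: int, boundary_bytes: int,
               sector_size: int = SECTOR_SIZE) -> bool:
    """True if the partition start is a multiple of boundary_bytes."""
    return start_offset_bytes(start_sector, sector_size) % boundary_bytes == 0


if __name__ == "__main__":
    for start in (63, 128, 2048):
        print(start, start_offset_bytes(start),
              is_aligned(start, 64 * 1024), is_aligned(start, 1024 * 1024))
```

Sector 63 (offset 32256 bytes) is misaligned against both boundaries, so some guest I/Os straddle two storage blocks; sector 2048 (offset 1 MiB) is aligned against both.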
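The three provisioning policies differ in when space is allocated and when blocks are zeroed. The model below is a simplified sketch of those two properties only; it ignores metadata overhead and array offloads, and the `ProvisioningPolicy` type and sizes are hypothetical.

```python
# Simplified model of virtual disk provisioning policies (hypothetical types).
from dataclasses import dataclass


@dataclass(frozen=True)
class ProvisioningPolicy:
    name: str
    allocate_full_at_creation: bool  # space reserved on the datastore up front
    zero_at_creation: bool           # all blocks zeroed before first use


POLICIES = {
    "thick_lazy": ProvisioningPolicy("Thick provision lazy zeroed", True, False),
    "thick_eager": ProvisioningPolicy("Thick provision eager zeroed", True, True),
    "thin": ProvisioningPolicy("Thin provision", False, False),
}


def datastore_usage_gb(policy: ProvisioningPolicy, provisioned_gb: int,
                       written_gb: int) -> int:
    """Datastore space consumed: full size for thick disks, written data for thin."""
    if policy.allocate_full_at_creation:
        return provisioned_gb
    return min(written_gb, provisioned_gb)


def first_write_needs_zeroing(policy: ProvisioningPolicy) -> bool:
    """Lazy zeroed and thin disks zero each block on its first write."""
    return not policy.zero_at_creation


if __name__ == "__main__":
    for policy in POLICIES.values():
        print(policy.name, datastore_usage_gb(policy, 100, 30),
              first_write_needs_zeroing(policy))
```

The trade-off shows up directly: eager zeroed avoids first-write zeroing but consumes full space immediately, while thin saves space at the cost of zeroing (and allocating) on first write.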

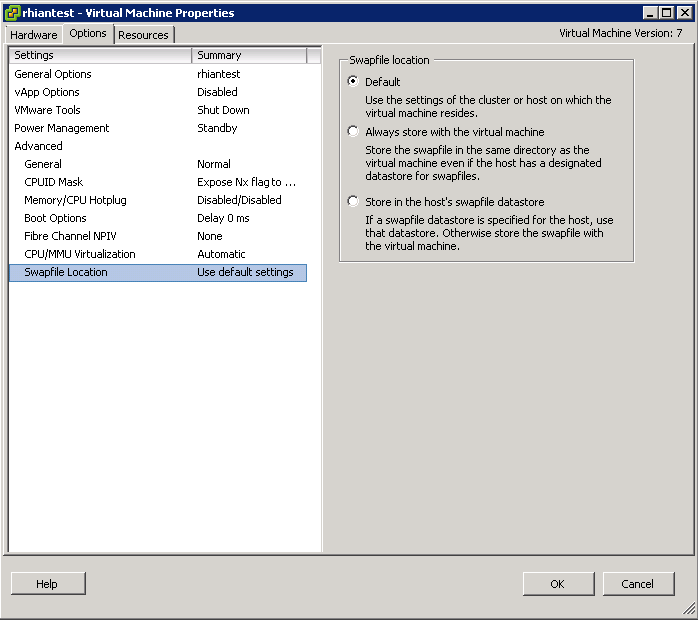

- Store the VM swap file on a fast or SSD-backed datastore

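- When deploying a virtual machine, an administrator can choose among three virtual disk modes: dependent, independent persistent and independent nonpersistent. For optimal performance, independent persistent is the best choice, because its writes always go directly to the base disk and are never redirected to snapshot delta files. The virtual disk mode can be changed while the VM is powered off.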
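The behavior of the three disk modes can be summarized in a small model. This is a hypothetical sketch, not a vSphere API: `DiskMode`, `VirtualDisk` and `change_disk_mode` are illustrative names, and the power-state check mirrors the guidance that the mode is changed while the VM is powered off.

```python
# Hypothetical model of virtual disk modes (not a vSphere API).
from dataclasses import dataclass
from enum import Enum


class DiskMode(Enum):
    DEPENDENT = "dependent"
    INDEPENDENT_PERSISTENT = "independent persistent"
    INDEPENDENT_NONPERSISTENT = "independent nonpersistent"


def included_in_snapshots(mode: DiskMode) -> bool:
    """Only dependent disks are captured by VM snapshots."""
    return mode is DiskMode.DEPENDENT


def changes_survive_power_off(mode: DiskMode) -> bool:
    """Nonpersistent disks discard their changes at power off or reset."""
    return mode is not DiskMode.INDEPENDENT_NONPERSISTENT


@dataclass
class VirtualDisk:
    mode: DiskMode = DiskMode.DEPENDENT


def change_disk_mode(disk: VirtualDisk, new_mode: DiskMode,
                     vm_powered_on: bool) -> None:
    """Change the mode, refusing while the VM is running."""
    if vm_powered_on:
        raise RuntimeError("power off the VM before changing the disk mode")
    disk.mode = new_mode


if __name__ == "__main__":
    disk = VirtualDisk()
    change_disk_mode(disk, DiskMode.INDEPENDENT_PERSISTENT, vm_powered_on=False)
    print(disk.mode, included_in_snapshots(disk.mode),
          changes_survive_power_off(disk.mode))
```

Independent persistent is the only mode that both persists changes and stays out of snapshots, which is the combination the performance recommendation relies on.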

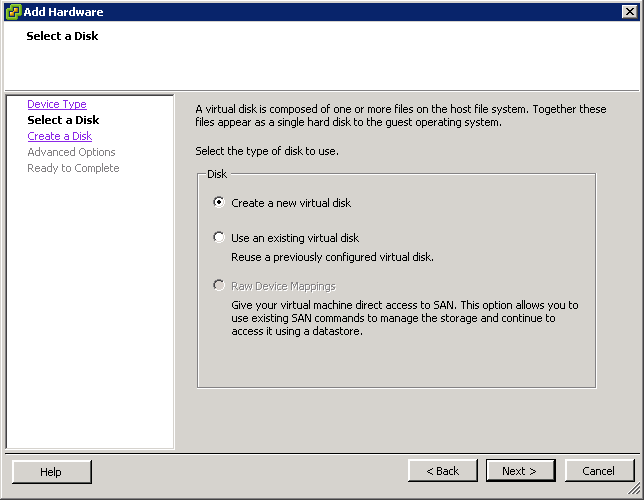

- Choose between VMFS virtual disks and RDM (Raw Device Mapping) disks. RDM disks are generally used by clustering software.

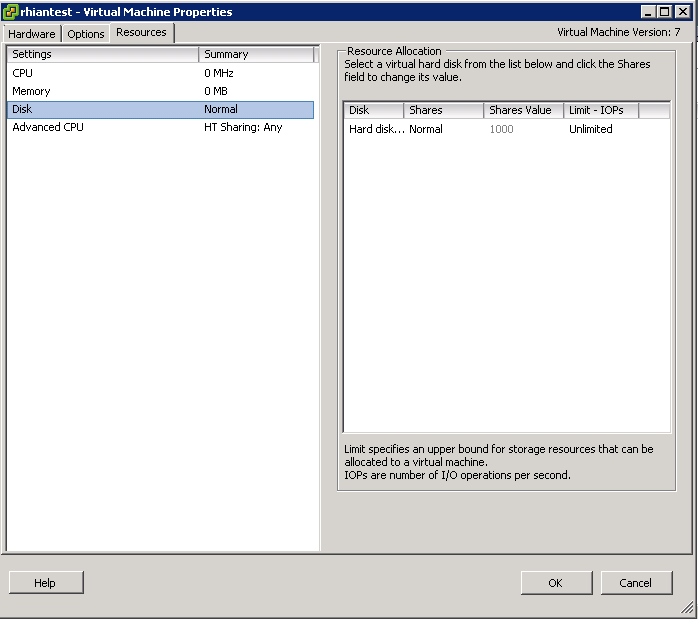

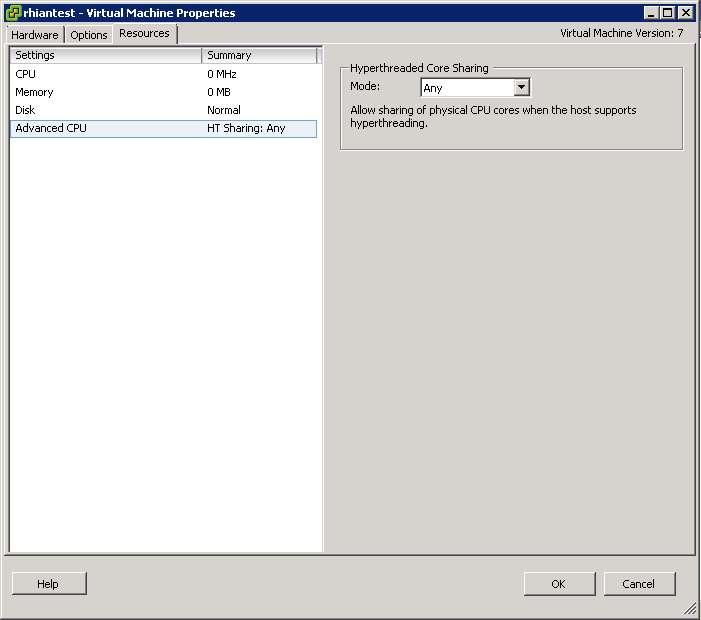

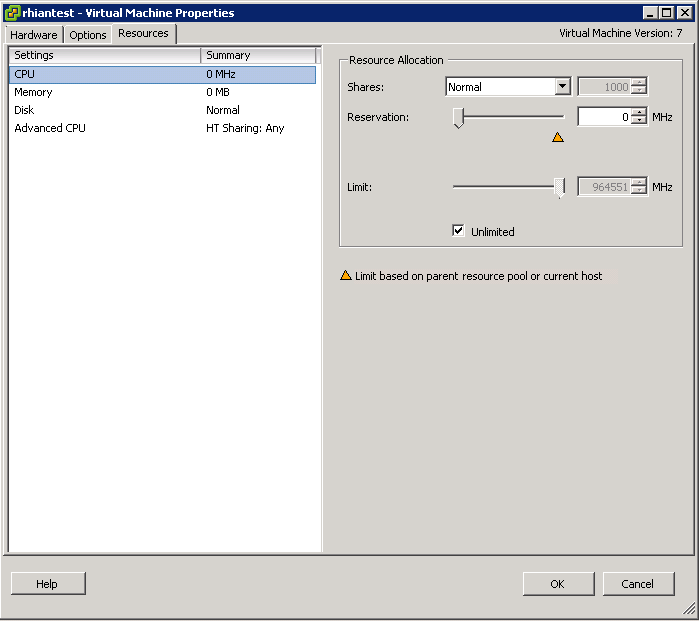

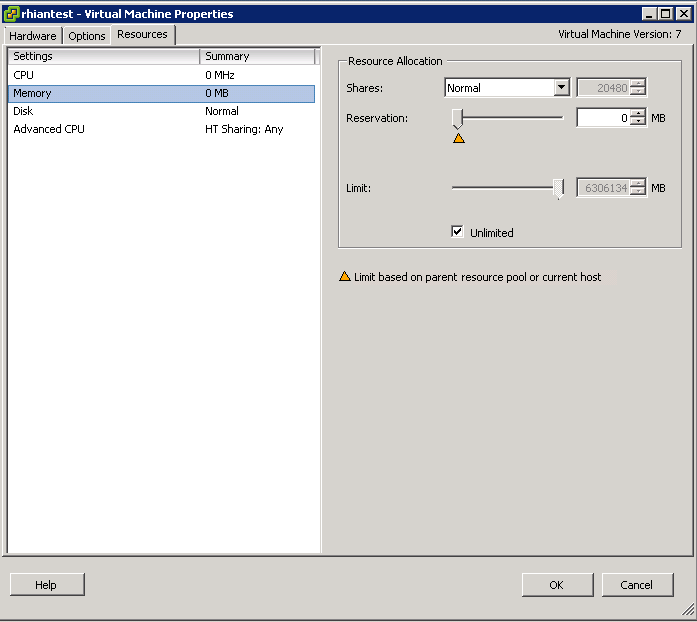

- Use disk shares to configure more fine-grained control over how storage I/O is divided among VMs

- In some cases, large I/O requests issued by applications can be split by the guest storage driver. Changing the VM's registry settings so that it issues larger block sizes can eliminate this splitting and thus improve performance. See http://kb.vmware.com/kb/9645697
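Disk shares only matter when the datastore is contended; at that point each disk receives I/O in proportion to its shares. The sketch below models that proportional split, assuming the vSphere Client presets of 500 (Low), 1000 (Normal) and 2000 (High); the VM names and the 8000 IOPS capacity are hypothetical.

```python
# Proportional-share model of disk shares under contention.
# VM names and the available IOPS figure are hypothetical.

SHARE_PRESETS = {"low": 500, "normal": 1000, "high": 2000}


def allocate_iops(shares: dict[str, int],
                  available_iops: float) -> dict[str, float]:
    """Split available IOPS across disks in proportion to their shares."""
    total = sum(shares.values())
    if total <= 0:
        raise ValueError("at least one disk must have positive shares")
    return {name: available_iops * s / total for name, s in shares.items()}


if __name__ == "__main__":
    shares = {
        "db": SHARE_PRESETS["high"],
        "web": SHARE_PRESETS["normal"],
        "batch": SHARE_PRESETS["normal"],
    }
    print(allocate_iops(shares, 8000))
```

With these values the High-share disk receives twice the I/O of each Normal-share disk (4000 versus 2000 IOPS), but only while demand exceeds what the datastore can deliver.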
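The cost of I/O splitting is easy to see arithmetically: a request larger than the driver's maximum transfer size becomes several smaller requests. The sketch below assumes hypothetical 64 KiB and 512 KiB limits; the actual setting and its permitted values are described in the KB article, not here.

```python
# Models how a storage driver splits a request at its maximum transfer size.
# The transfer limits used below are hypothetical examples.


def split_io(request_bytes: int, max_transfer_bytes: int) -> list[int]:
    """Return the sizes of the pieces a request is split into."""
    if request_bytes < 0 or max_transfer_bytes <= 0:
        raise ValueError("sizes must be non-negative and the limit positive")
    full, remainder = divmod(request_bytes, max_transfer_bytes)
    return [max_transfer_bytes] * full + ([remainder] if remainder else [])


if __name__ == "__main__":
    one_mib = 1024 * 1024
    print(len(split_io(one_mib, 64 * 1024)))   # 16 pieces
    print(len(split_io(one_mib, 512 * 1024)))  # 2 pieces
```

Raising the limit reduces the number of requests per application I/O, which is where the performance gain from the registry change comes from.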